Robustness of the estimates depends on the extent, or persistence, of the autocorrelations affecting current observations. The autocorr function, called without output arguments, produces an autocorrelogram of the residuals and gives a quick visual take on the residual autocorrelation structure:

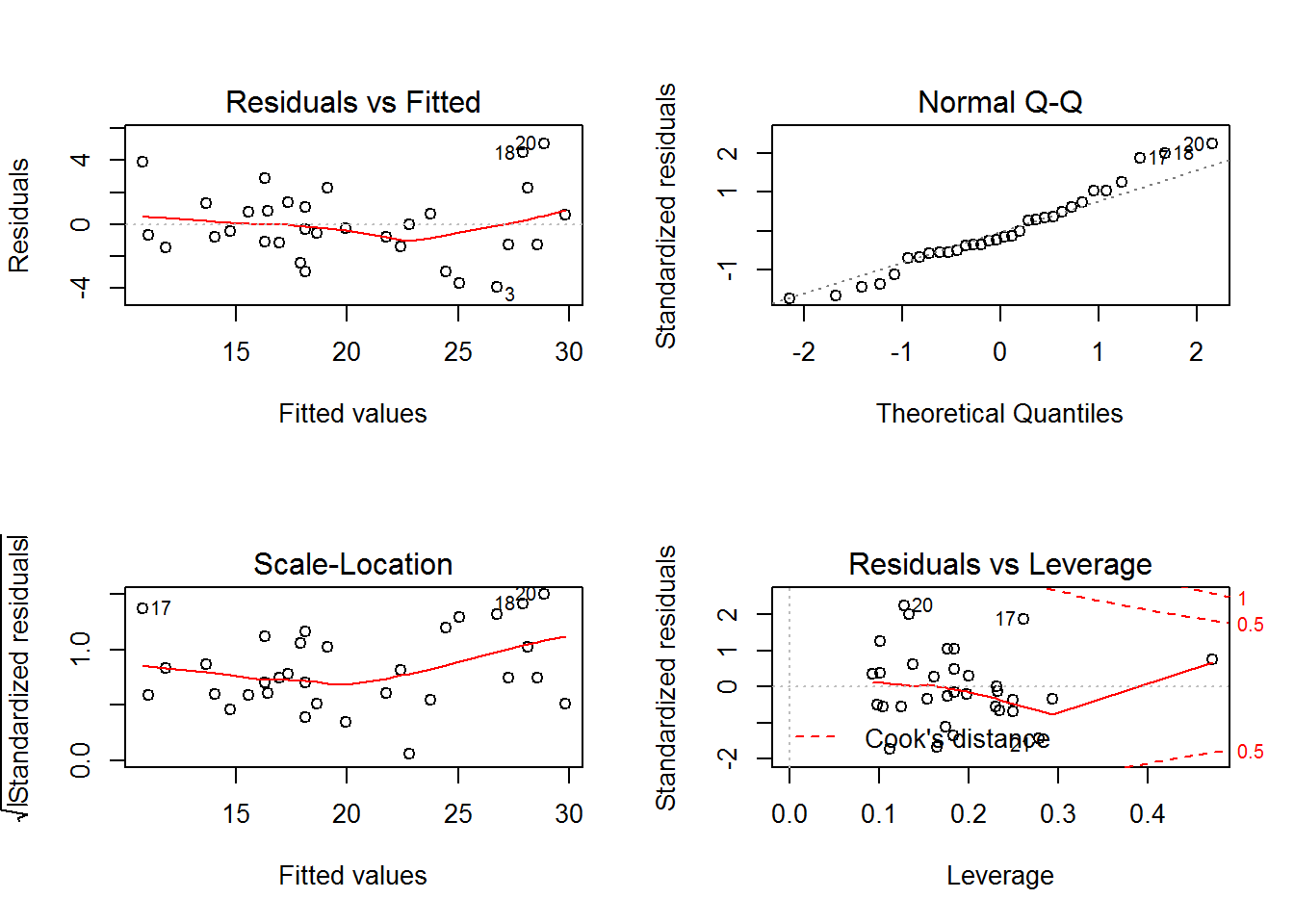
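
As a rough illustration of what an autocorrelogram summarizes, the sketch below computes sample autocorrelations of a residual series in plain Python. It is not the autocorr function itself. The helper name `sample_acf`, the made-up residual series, and the approximate band of 2/sqrt(n) (a common rule of thumb under a white-noise assumption) are all assumptions of this example.

```python
import math

def sample_acf(x, max_lag):
    """Sample autocorrelation of x at lags 0..max_lag (hypothetical helper)."""
    n = len(x)
    mean = sum(x) / n
    dev = [v - mean for v in x]
    c0 = sum(d * d for d in dev)  # lag-0 sum of squares, used as the normalizer
    return [sum(dev[t] * dev[t + k] for t in range(n - k)) / c0
            for k in range(max_lag + 1)]

# Made-up residual series with persistence: e[t] = 0.8 * e[t-1] + shock[t].
shocks = [0.5, -0.2, 0.1, 0.4, -0.3, 0.2, -0.1, 0.3, -0.4, 0.1,
          0.2, -0.5, 0.3, 0.1, -0.2, 0.4, -0.1, -0.3, 0.2, 0.1]
residuals = []
prev = 0.0
for s in shocks:
    prev = 0.8 * prev + s
    residuals.append(prev)

acf = sample_acf(residuals, max_lag=5)
# Approximate two-standard-error band, assuming the series is white noise.
bound = 2 / math.sqrt(len(residuals))

for lag, r in enumerate(acf):
    flag = "outside band" if lag > 0 and abs(r) > bound else ""
    print(f"lag {lag}: {r:+.3f} {flag}")
```

Lags whose autocorrelation falls outside the band are the ones a reader would pick out visually on the autocorrelogram. With a sample this small the band is wide, so only fairly strong persistence will show up.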

Residual analysis is important in regression because it provides a measure of model fit. Model fit denotes the amount of error associated with predicting an outcome. All regression models have some error when estimating outcomes. Residuals are the difference (or error) between the observed value and the value predicted by the model.

When assessing overall model fit (or error) of multiple regression and logistic regression models, plot the raw residuals on the y-axis against the estimated outcomes on the x-axis. The values should be close to zero, which means that the predicted values are relatively similar to the observed values.

Assessing overall model fit with proportional odds regression and multinomial logistic regression is tedious and time-consuming. Essentially, researchers choose a reference category within the categorical or ordinal outcome, create "a - 1" logistic regression models (where "a" is the number of independent categories or ordinal ranks in the outcome), and repeat the residual analysis for each. Plot the raw residuals against the estimated outcomes for all models. If all models have values close to zero, then model fit can be assumed.

With Cox regression, Cox-Snell residuals should be calculated. These residuals are then used as the time variable in a Kaplan-Meier curve predicting the outcome. This curve is then compared to a survival function in which the outcome has been modeled using a unit exponential distribution. If the curves are similar, then model fit can be assumed.

Finally, for Poisson regression, plot the standardized residuals on the y-axis against the expected rate of the outcome on the x-axis. Evidence of model fit is assumed when 95% of the residuals are between -2 and 2.

For each model, the residuals scatter around a mean near zero, as they should, with no obvious trends or patterns indicating misspecification. The scale of the residuals is several orders of magnitude less than the scale of the original data (see the example Time Series Regression I: Linear Models), which is a sign that the models have captured a significant portion of the data-generating process (DGP). There appears to be some evidence of autocorrelation in several of the persistently positive or negative departures from the mean, particularly in the undifferenced data. A small amount of heteroscedasticity is also apparent, though it is difficult for a visual assessment to separate this from random variation in such a small sample.

In the presence of autocorrelation, OLS estimates remain unbiased, but they no longer have minimum variance among unbiased estimators. This is a significant problem in small samples, where confidence intervals will be relatively large. Compounding the problem, autocorrelation introduces bias into the standard variance estimates, even asymptotically. Because autocorrelations in economic data are likely to be positive, reflecting similar random factors and omitted variables carried over from one time period to the next, variance estimates tend to be biased downward, toward t-tests with overly optimistic claims of accuracy. The result is that interval estimation and hypothesis testing become unreliable. More conservative significance levels for the t-tests are advised.
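
The basic residual check described in this section (residuals as observed minus predicted, examined against the fitted values) can be sketched in plain Python. This is a minimal illustration under stated assumptions. The data are invented and the helper `fit_line` is hypothetical. Dividing by the residual standard deviation is a simplified form of standardization that ignores leverage. Applying the "between -2 and 2" rule of thumb to a straight-line fit is an assumption of this sketch, since the text states that rule for Poisson regression.

```python
import math

def fit_line(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b). Hypothetical helper."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b

# Invented data, for illustration only.
x = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
y = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1, 18.0, 19.9]

a, b = fit_line(x, y)
fitted = [a + b * xi for xi in x]
residuals = [yi - fi for yi, fi in zip(y, fitted)]  # observed minus predicted

mean_resid = sum(residuals) / len(residuals)
# Residual standard deviation with n - 2 degrees of freedom (two fitted parameters).
s = math.sqrt(sum(r * r for r in residuals) / (len(residuals) - 2))
standardized = [r / s for r in residuals]  # simplified standardization (assumption)
share_within_2 = sum(abs(z) <= 2 for z in standardized) / len(standardized)

# Each printed pair is one point of a residuals-versus-fitted plot.
for fi, r in zip(fitted, residuals):
    print(f"fitted={fi:7.3f}  residual={r:+.3f}")
print(f"mean residual = {mean_resid:.2e}, share within +/-2 = {share_within_2:.0%}")
```

The printed pairs are what would go on the x-axis (fitted) and y-axis (residual) of the plot. A mean residual near zero is expected by construction for a least-squares fit with an intercept, so the more informative signs are trends or runs of same-signed residuals.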
